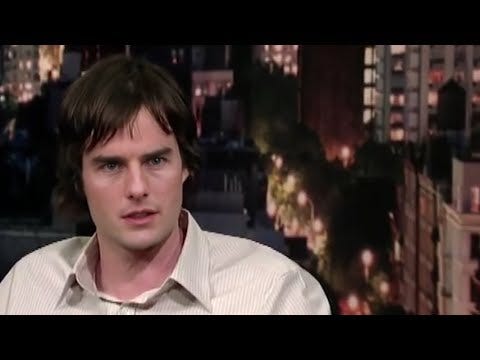

Here’s a stunning video where Bill Hader’s face transforms into Tom Cruise’s and then Seth Rogen’s as he speaks with Letterman.

The images are transformed with machine learning. These videos are known as “deep fakes.” They started off as still images (see Nvidia research here) and were quickly extended to videos in early 2018. As you can see, they have gotten pretty good.

I first encountered deep fakes in early 2018 after seeing a flurry of news reports that Reddit was banning deep fake videos that transposed celebrity faces into adult videos. At the time, I was working on machine learning products at Google, so my first thought was: how can we protect people from fake content about them?

My line of thinking:

These are only going to get better and easier to make

Wherever there’s an incentive to create a fake, somebody will do it (porn is one thing, but imagine faking a world leader saying something inciting to another world leader)

If not stopped, the power of facts will continue to weaken as the average person’s trust in the veracity of any given piece of content is diminished

And when facts and truth lose power, bad things happen

Proving something is real is relatively easy. Each time content is captured, you could create and store some information about that content. Since this is mostly a crypto blog, imagine hashing that video and stamping it to a blockchain. Anybody could then take that content and find when it was originally stamped. You could add various metadata like GPS location and so on for further verification.

So if I took a video of myself eating pineapple pizza and wanted to prove that yes, I think pineapple tastes good on pizza, I’ve always said this, and in fact I ate some years ago, I could just share a proof that it is indeed a real video of me eating pineapple pizza on that day.

Of course this assumes that the content hasn’t been faked prior to stamping. So you have to trust the entire stack, from the nitty gritty bits of your camera all the way to whatever is relaying the information to the blockchain.
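The stamping idea above can be sketched in a few lines of Python. This is only an illustration under loud assumptions: `ToyLedger` is a hypothetical in-memory stand-in for a blockchain, the `Stamp` record and its `metadata` field are invented for the example, and SHA-256 is just one reasonable choice of hash. A real system would also need the trusted capture stack described above.

```python
import hashlib
import time
from dataclasses import dataclass, field


@dataclass
class Stamp:
    """Hypothetical record of when a piece of content was first seen."""
    content_hash: str
    stamped_at: float
    metadata: dict = field(default_factory=dict)


class ToyLedger:
    """Hypothetical append-only log standing in for a blockchain."""

    def __init__(self):
        self._stamps = {}

    def stamp(self, content: bytes, metadata=None, now=None) -> Stamp:
        # Only the hash is stored, never the content itself.
        digest = hashlib.sha256(content).hexdigest()
        # Keep the earliest stamp; re-stamping the same content changes nothing.
        if digest not in self._stamps:
            stamped_at = time.time() if now is None else now
            self._stamps[digest] = Stamp(digest, stamped_at, dict(metadata or {}))
        return self._stamps[digest]

    def lookup(self, content: bytes):
        # Returns the original Stamp, or None if this exact content was never stamped.
        return self._stamps.get(hashlib.sha256(content).hexdigest())


ledger = ToyLedger()
video = b"bytes of my pineapple pizza video"  # placeholder for real file bytes
ledger.stamp(video, metadata={"gps": "hypothetical location"})

print(ledger.lookup(video) is not None)            # the original verifies
print(ledger.lookup(video + b"edited") is None)    # any change breaks the match
```

Note what a failed lookup does and does not tell you: it only says this exact content was never stamped, not that the content is fake.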

Proving that a real thing is real is tractable.

But proving that a fake thing is not real is hard unless every single piece of content goes through this untamperable stamping process: every phone video, CCTV footage, DSLR shot, etc. Perhaps this could happen, but it’d take both (a) a huge coordinated effort across industries (it would probably require every country to make it a law) and (b) pretty serious privacy concessions from users. I know I wouldn’t want every picture or video ever taken of me stored on a blockchain somewhere (even if they’re hashed).

My conclusion then is the same as my conclusion now: we are nearing a point where fake content is indistinguishable from real content, and while we have ways to prove real things are real, we may never have ways to prove fake things aren’t real. (Though here are some researchers trying to use mice to detect fakes from their audio.)

That’s pretty scary. And I think what ends up happening is we place less weight on photos and recorded videos and more weight on streaming video. If we don’t see something with our own eyes, our first instinct will be to wonder if what we’re being shown is fake.

Lots of implications here. What do you all think will happen? And am I missing a practical way to identify fake things at scale?

An aside: proving fake things are fake is one of the most difficult tasks for the physical collectible goods market too. A friend close to the industry mentioned that shoe manufacturers will do things like put fragrances in the glue to distinguish authentic shoes from fakes. The manufacturer won’t release a full list of little things they do, but will selectively disclose some things to members of the ecosystem (e.g. authenticators working for secondary marketplaces).

You could imagine some sort of digital fingerprinting being added to this list. But part of what makes this work well is the trust model. The manufacturer maintains a secret list. It’s not all open and verifiable. If it were, it’d be easier to make fakes that are truly 1:1. This reduces the likelihood a verifier gets it wrong (labeling a fake as real), but also means they cannot be 100% sure they’re not labeling authentic shoes as fake! (What if one batch was manufactured without the fragrant glue?)